In the winter of 1978, Time magazine ran a special issue heralding the arrival of what it called the “miracle chip,” a tiny slice of etched silicon packed with enough computing power, the editors promised, to change the world.

Time’s writers described the chip in superlatives: “Unlike the hulking Calibans of vacuum tubes and tangled wires from which it evolved, [the chip] is cheap, easy to mass-produce, fast, infinitely versatile and convenient.”

“Just as the Industrial Revolution took over an immense range of tasks from men’s muscles and enormously expanded productivity, so the [silicon chip-powered] microcomputer is rapidly assuming huge burdens of drudgery from the human brain,” the writers cooed.

The issue sketched out a tantalizing future filled with mechanical marvels such as supermarket scanners and interactive TVs – both of which have indeed come to pass – quoted sociologists who believed the invention would lead to something akin to Athenian democracy, and informed the reader that, using the chip, a company was actively developing, wonder of wonders, a “portable phone that weighs less than two pounds and has no cord.”

AN ‘OVERNIGHT’ REVOLUTION 20 YEARS IN THE MAKING

The editors of Time were attempting to play the role of prophets, but they were several years behind the times. By 1978, the silicon microchip had been around for almost two decades, the first patent applications for an integrated circuit having been filed in 1959.

The big breakthrough of the late ’70s, and the one to which the editors of Time were correctly attuned, was that computer manufacturers could now base the core design of a working, portable computer around a chip or, more properly, a small group of interconnected chips.

This innovation allowed them to construct and mass-produce the first “micro” computers able to perform useful, if mundane, clerical work, including word processing and generating simple spreadsheets. The first such microcomputer to really take off, the Apple II, had been launched in 1977.

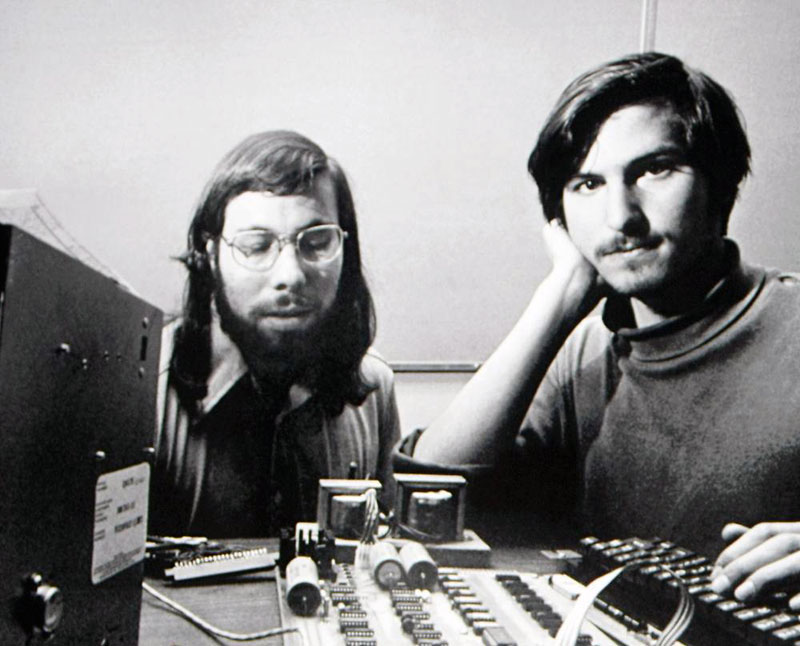

Steve Jobs and Steve Wozniak built the first Apple computers in their garages. The beginning of one of today’s preeminent high-tech companies was, somewhat paradoxically, a decidedly low-tech affair.

Above: Steve Wozniak and Steve Jobs with an early Apple computer prototype in the mid-1970s

We would also note that Bill Gates was merely a bright, upper-middle-class college sophomore when he founded Microsoft in 1975. The author once saw him inhale his dinner in his college dorm room, sitting alone, in under three minutes! Gates was unusual in that he had gained experience in computer programming while in high school, but he was otherwise a novice in standard American business practices.

The founders of Apple and Microsoft had more in common with eccentric hobbyists than they did with the titans of corporate America. But they had two legs up on the existing hardware companies. The first was the obsessiveness with which they followed technological innovations that the established experts regarded as interesting but relatively unimportant: to wit, the exponentially improving speed of microchips and the ability to connect small networks of chips to form microcomputers.

Their second leg up was their radically futuristic and democratic attitude. In Jobs and Wozniak’s case, the two young entrepreneurs believed the company they were starting had inherently political and ‘liberationist’ possibilities contained in its design plans for new personal computers for the ‘everyman’ and ‘everywoman’. The ‘pirates’ of Silicon Valley didn’t just want to build a better mousetrap – they wanted to democratize the economy!

The dominant player in computing in 1978, IBM, considered the microcomputer to be little more than a toy and not any kind of serious business threat. IBM wouldn’t come out with a microcomputer of its own for another three years, and when it did, it wasn’t very good.

The founders of Apple and Microsoft clearly knew some things the then-reigning corporate hierarchs did not.

2025 VERSUS 1975: THE UBERMENSCH STRIKES BACK

Herein lies the biggest difference between the microchip revolution of the late 1970s and the AI “revolution” we are currently undergoing: this time, there is no underlying “power to the people” ethos to America’s latest technological movement. In contrast to the heady early days of microchips and home computers during the 1980s, the present AI breakthrough moment feels more like the ‘big guys’ are shoving what they want down our throats rather than empowering us with the gift of a new technology.

Hence the title of this week’s post: “The AI Revolution versus the Chip Revolution: Six Reasons We’re Not Having Fun This Time.”

For example, Google searches now generate their own little AI summaries, whether we users like it or not. These AI summaries undermine actual visits to other websites (disrupting business models) and are wasteful: a standard Google search takes maybe a third of a watt-hour of electricity, while an AI query can sometimes use as much energy as a microwave oven running for an hour.

Pew Research finds that only 11% of US adults are more “excited than concerned” about the increased use of AI in daily life, and more than four in ten (43%) expect the latest AI breakthroughs, and AI more generally, to personally harm them. In contrast to the public euphoria over the advent of the personal computer, why does artificial intelligence scare us more than excite us?

WILLINGLY ADOPT AI – OR ELSE

One obvious reason is job security. Already, over the last two years, AI has helped to eliminate about a quarter of lower-level, strictly coding-related computer programming jobs in the United States (the number of higher-level software development jobs has held more or less steady).

But the unease goes much deeper than concern for our jobs. Even if we think AI will not be replacing us at work, many of us know AI will be “assisting” us — whether we like it or not. If our managers see AI as improving our productivity, then we will simply have to use it, even for tasks we basically enjoy doing ourselves.

This ‘performance-enhancing drug’ will soon be mandatory across the entire corporate sphere: A Harris poll shows that nearly three-quarters of CEOs fear for their jobs if they don’t show AI-driven business gains over the next two years.

In 1975, pocket calculators, built with early microchips, put the last U.S. slide rule manufacturer out of business. Soon, personal computers would be putting a lot of the pocket calculator makers out of business.

But why does it feel like AI is already busy putting little pieces of our brains out of business?

Maybe this goes back to when we started relying on spellcheck, or to when we stopped looking at road maps and started just plugging addresses into our GPS units to go anywhere. When was that – 2007? 2010? Can we even remember?

To those of us over 40, it feels like we used to have a bevy of practical skills that somehow became obsolete, very quickly, over the past decade and a half.

Will AI ‘level the proverbial playing field’? Will being smart and creative still count for something when machines are also smart and creative? Will dumb people be passing us career-wise only because they are more adept at “cheating” with AI? These aren’t small questions. The answers will be consequential and could very well be terrifying. Or they could be far more mundane than we currently believe.

ARTIFICIAL INTELLIGENCE AND US: THE VIEW FROM 10,000 FEET

When assessing the threat AI poses to our jobs, our sanity, and to the world at large, there are six important points to keep in mind:

1. Everybody Will Be Trying to Figure Out How AI Can Make Them a Star.

Students have already begun using AI wherever it can help them get good grades, even if it means that they learn less.[i] They are, of course, meant to be cultivating the neural networks of their brains, not those of their ChatGPTs. The loss in skills learned when students use AI to help them write has been measured. As the science writer James Gleick puts it, “Using ChatGPT to write your term papers is like bringing a robot to the gym to lift weights for you.”

Legal associates will soon be using AI to help them write legal memos and briefs, even if, on occasion, the AI cites cases made up out of whole cloth. Musicians will be using AI to fine-tune hits and make their melodies burrow even deeper into our ears. AI will be viewed as a necessary evil for ‘keeping up with the Joneses’. Occasionally, it will dazzle, amaze, and even be a little fun.

2. AI, In Its Current Form, Isn’t All That New.

By 1978, computers had been changing the world for over three decades. The Time magazine “Miracle Chip” issue of February 1978 pointed out that if it weren’t for computers, the amount of “manpower” needed to run the nation’s telephone switchboards in 1978 would equal “the entire U.S. female population between 18 and 45.”

Similarly, the kind of multidimensional vector database (MVD) that powers the new “talking” large language models (LLMs) has been assisting us for a very long time – for over 20 years, in fact. The Google search engine, for example, has long depended on this kind of array of information boiled down to raw numbers.

As one AI expert put it, all that the new LLMs are doing is just “glorified old-fashioned regression on the fly.” The LLM knows nothing, understands nothing—it is just coming up with a likely next word that will fit into a sentence. The technology powering the latest artificial intelligence breakthroughs is not something completely new or revolutionary. AI is simply leveraging a set of pre-existing MVD models to achieve new effects.

That being said, the way “language” suddenly emerged from ChatGPT scared a lot of people, including some of its builders. One engineer said that when ChatGPT really “spoke” for the first time, in fall 2022, he felt like he was meeting an extraterrestrial.
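The “likely next word” idea described in this point can be illustrated with a deliberately tiny sketch. The code below is not how ChatGPT or any real LLM is built: real models use large neural networks over tokens, while this toy simply counts which word follows which in a made-up snippet of text. The corpus and every function name here are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict

# Tiny made-up training text (hypothetical, for illustration only).
CORPUS = (
    "the chip is cheap . the chip is fast . "
    "the chip is cheap . the phone has no cord ."
)


def build_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw follower counts for `word` into probabilities."""
    followers = counts.get(word)
    if not followers:
        return {}
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()}


def most_likely_next(counts, word):
    """Return the single most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


COUNTS = build_bigram_counts(CORPUS)

if __name__ == "__main__":
    print(most_likely_next(COUNTS, "is"))         # cheap
    print(next_word_probabilities(COUNTS, "is"))  # cheap: 2/3, fast: 1/3
```

The toy “knows” nothing about chips or phones. It only reports that, in its training text, “cheap” followed “is” more often than “fast” did. That is the sense in which the next word is merely likely.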

3. AI Is Almost Entirely Big Tech, but Small Firms Will Have a Role to Play Inventing Niche Products.

The LLMs require huge server farms of graphics processors (GPUs) in order to function properly. To do the calculations necessary to build a serviceable LLM, a tech company typically needs something like 6,000 GPUs running for 12 days.
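To get a feel for the scale of “6,000 GPUs running for 12 days,” the figure can be converted into GPU-hours with simple arithmetic. This is only a back-of-the-envelope sketch: it takes the two numbers quoted above at face value and assumes every GPU runs around the clock, ignoring the overhead of coordinating thousands of processors. The function names are hypothetical.

```python
HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365

# Figures quoted in the text above.
GPUS = 6_000
DAYS = 12


def gpu_hours(gpus, days):
    """Total GPU-hours if every GPU runs around the clock for `days` days."""
    return gpus * days * HOURS_PER_DAY


def years_on_one_gpu(total_gpu_hours):
    """How long the same work would take a single GPU (idealized)."""
    return total_gpu_hours / (HOURS_PER_DAY * DAYS_PER_YEAR)


TOTAL_GPU_HOURS = gpu_hours(GPUS, DAYS)

if __name__ == "__main__":
    print(f"{TOTAL_GPU_HOURS:,} GPU-hours")                          # 1,728,000 GPU-hours
    print(f"{years_on_one_gpu(TOTAL_GPU_HOURS):.0f} years on one GPU")  # 197 years on one GPU
```

Under these assumptions, the job comes to roughly 1.7 million GPU-hours, or about two centuries of work for a single processor. That is why only firms with very large server farms can train such models from scratch.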

Since there is a proven correlation between model size and truthfulness, bigger is perceived as better, even though smaller models sometimes outperform the models built by the tech behemoths through better design and training. High-performing models built by China’s DeepSeek, for example, which can solve math problems better than the average math PhD holder, are relatively small.

It’s already clear that a slew of smaller businesses, some using open-source LLMs, will soon be producing successful products to rival, or at least complement, the LLMs designed by the larger tech firms.

Unfortunately, some of these smaller companies are doing despicable things like turning “nudify” into an actual thing and a new English word, as they train their models on the millions of pictures of naked women available on the Internet. Add a picture of someone you want to see undressed, and out it comes.

4. At Least for Now, We Are Done with Dramatic AI Breakthroughs, But You Wouldn’t Know That From All the Hype.

Big tech talks about being on the verge of “artificial general intelligence” and “super intelligence.” The real AI breakthrough came back in 2012, when it was shown that artificial neural networks, when run on powerful parallel processors (chips originally developed to drive graphics in video games, which can pursue numerous calculations at once), could finally live up to their long-recognized potential for machine learning.

In 2012, a “deep learning” system based on neural nets called AlexNet was the runaway winner of the ImageNet challenge, a high-profile image classification competition. Neural networks would soon become virtually synonymous with AI.

It is important to clarify that AIs, at their present stage of development, have no visualization skills and no coherent understanding of the world. When fed reams of information on planetary motion and Kepler’s laws, for example, they can’t work back and deduce Newton’s laws of motion. Humans spend a lot of time fine-tuning and “training” AI responses to cover up for this lack of any unified understanding of the world.

Meta’s Mark Zuckerberg is currently talking about his ambition to invent “superintelligence.” This talk is silly. Computers have been ‘super intelligent’ at many tasks for a long time. They can beat us at chess, at Go, and at Jeopardy! And a very simple neural network made of 800 “neurons,” roughly the number found in a jellyfish (leading the bot to be called Jellyfish), has been beating us at backgammon since the 1990s.

You’ll hear about the enormous size of the latest AI systems (GPT-4 is said to have 1.76 trillion parameters, that is, the numerical weights that encode associations between its “tokens,” which here are words or pieces of words), but bulking up the LLMs will not lead to dramatic changes in performance.

Instead, notable improvements will come from fine-tuning, secondary training, and adding AI “agents” that are very good at specific tasks. Developers will also be “distilling” the essences of other AI models to incorporate into their own. The best AIs will be like the very finest whiskeys: careful blends of abilities that go down easy.

5. The Tech Barons Are All After the Same Thing: The One Universal AI App That None of Us Can Live Without.

The tech barons are not conducting extremely expensive AI experiments to discover “artificial general intelligence,” or AGI, the way CERN, the European Organization for Nuclear Research, built the Large Hadron Collider to find the Higgs boson.

They are conducting huge AI experiments for a much more self-interested reason: they are trying to develop the one universal AI app that people will find indispensable and, ideally, will be part of all the indispensable systems currently on your computer: operating system, web browser, search engine, the works.

The way cars now have power steering and power brakes, computers will soon have AI-powered everything.

6. The Times We Live in Are Contributing to Making AI Scary.

Back in 1978, the editors of Time did come up with a few troubling scenarios that might crop up in a world powered by the microchip.

“Sophisticated computers in the wrong hands could begin subverting society,” they speculated. Or the literal-mindedness of computers might lead to some unintended consequence, such as a computer system accidentally launching a nuclear strike after interpreting incoming data as the start of a Soviet nuclear attack (does anyone remember the 1983 movie ‘WarGames’?).

Another possibility was that the U.S. federal government would eventually come to possess “one immense, interconnecting computer system: Big Brother.”

People worry that AI will destroy democracy. Some argue social media is already well on its way to doing just that. Undoubtedly, AI has qualities that appeal to people with clear authoritarian tendencies: AI can centralize and monitor; it is unsurpassed at the mechanisms of surveillance and policing. It can also be made to spew its own version of reality and praise someone like Adolf Hitler, as we recently saw with Elon Musk’s Grok.

There is also the science fiction-tinged promise of “uploading” your intelligence into a machine so that you can, if you are, say, a dictator, rule forever. We suspect some authoritarians are drawn to AI because they have such big egos that they actually believe this promise to be true.

OpenAI chief executive Sam Altman and friends have found a warm welcome at the current White House. As soon as he got into office, President Trump killed a Biden Administration executive order that tried to place a few reasonable constraints on AI, such as labeling and watermarking AI-generated content. This early Trump Administration decision was part and parcel of the populists’ strategic embrace of the tech barons and their newfound influence on the federal bureaucracy, a trend we have covered previously in this newsletter.

In March 2025, Amazon announced it would be training its Alexa virtual assistant on everything its millions of users were saying to it.

None of this is to say that AI can’t also help society.

When you get down to it, AI should be able to democratize professional services as it gives access to virtual experts when the real experts are not in, are too expensive, or are simply not that good at what they do.

At the same time, Mark Zuckerberg envisions a future in which eighty percent of our friends are virtual companions. Is this any more pathetic than having eighty percent of your friends be cats or dogs?

Should we fear AI? Not necessarily.

Should we fear what the tech-barons and the current political oligarchs want to do with it?

Probably, yes.

Robert Hill Cox (RCH)

July 27, 2025

Robert Cox is a novelist and short story writer residing in New York City. Mr. Cox worked as a financial writer and editor in the banking and insurance industries for 30 years.
